The AI Leadership Divide: Why 20% of Companies Capture 74% of the Value

The number that should keep you up at night

PwC surveyed 1,217 senior executives across 25 sectors for its 2026 AI Performance Study. One finding should reframe every AI conversation in your organization: 74% of AI's economic value is being captured by just 20% of companies.

Not 74% of the hype. Seventy-four percent of measured economic value -- actual revenue, actual margins -- flowing to one-fifth of the organizations trying to capture it.

The top performers generate 7.2 times more AI-driven value than everyone else, with profit margins nearly four percentage points higher. Meanwhile, 56% of CEOs report zero measurable financial returns from their AI investments. Some portion of that 56% likely reflects measurement gaps rather than genuine zero returns -- but even accounting for measurement failure, the concentration of value at the top is stark.

Your instinct, reading those numbers, is to ask: "Which model are they using? What technology stack are they running?"

That instinct is wrong. The gap is organizational, not technical. The top 20% aren't winning because they picked a better model -- they're winning because they reorganized decision-making and built the discipline to kill pilots fast. The evidence points consistently in one direction.

Strategic intent versus defensive deployment

The PwC data tells a clear story about what separates the top 20% from everyone else -- and it isn't their technology choices.

AI leaders are 2.6 times more likely to say AI has improved their ability to reinvent their business model. They pursue cross-industry convergence -- using AI to expand beyond traditional sector boundaries -- which PwC identifies as the single strongest predictor of AI-driven financial performance. They increase autonomous decision-making at 2.8 times the rate of peers while simultaneously investing more heavily in governance.

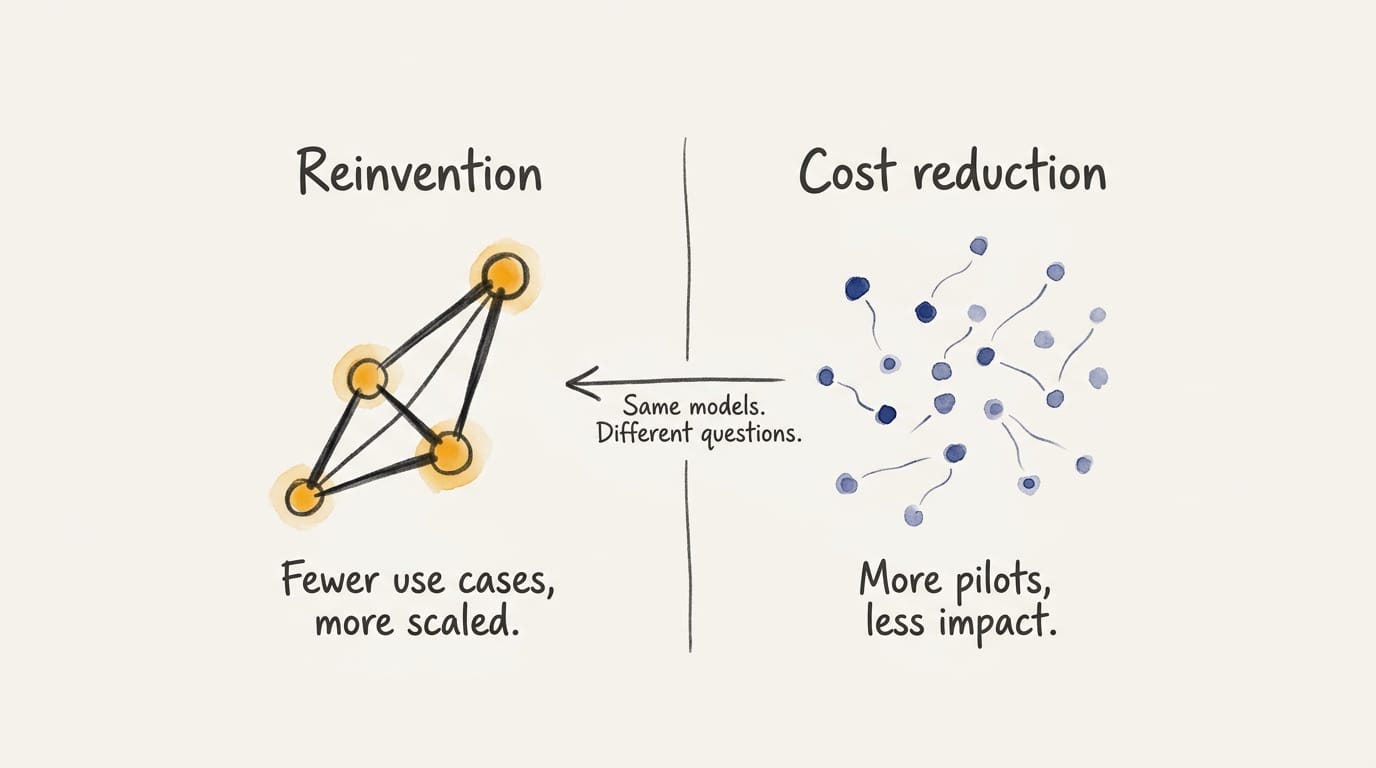

The laggards? Cost reduction. Productivity pilots. Maybe some process optimization if they're feeling ambitious. They're running the same models as the leaders -- they're just asking smaller questions.

BCG's research lands in the same place from a different direction. Its cohort of "future-built" firms, roughly 5% of the market, posts 1.7 times the revenue growth of AI laggards and 3.6 times their three-year total shareholder return. IDC's numbers are even more pointed: the top 22% of AI adopters achieve a 2.84 times return on investment, while laggards average 0.84 times, getting back roughly 84 cents for every dollar they put in. They're not breaking even. They're losing.
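To make the IDC multiples concrete, here is a minimal sketch that applies them to a hypothetical $1M AI investment. It assumes each multiple means "dollars returned per dollar invested." That is our reading of the figures, not IDC's stated definition. The budget is invented for illustration.

```python
# Hypothetical illustration. Assumes the IDC figures are "dollars returned
# per dollar invested" multiples; the $1M budget is an invented example.

def net_return(investment: float, roi_multiple: float) -> float:
    """Net gain (positive) or loss (negative) for a given return multiple."""
    return investment * roi_multiple - investment

budget = 1_000_000  # hypothetical AI investment in dollars

for label, multiple in [("top 22% of adopters", 2.84), ("laggards", 0.84)]:
    # Format with an explicit sign so losses and gains are easy to compare.
    print(f"{label}: net {net_return(budget, multiple):+,.0f} USD")
```

Under that assumption, the leaders net about +$1.84M and the laggards about -$160K. Any multiple below 1.0 means the investment returned less than it cost.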

AI leaders pursue roughly half as many use cases as laggards while scaling twice as many. That sounds contradictory until you look at the deployment footprint. Frontier firms use AI across seven business functions on average; laggards deploy across one or two. More functions doesn't mean more experiments. It means more bets that survived the cut. Discipline, not volume.

What the gap is actually about

If the gap isn't about technology, what is it about?

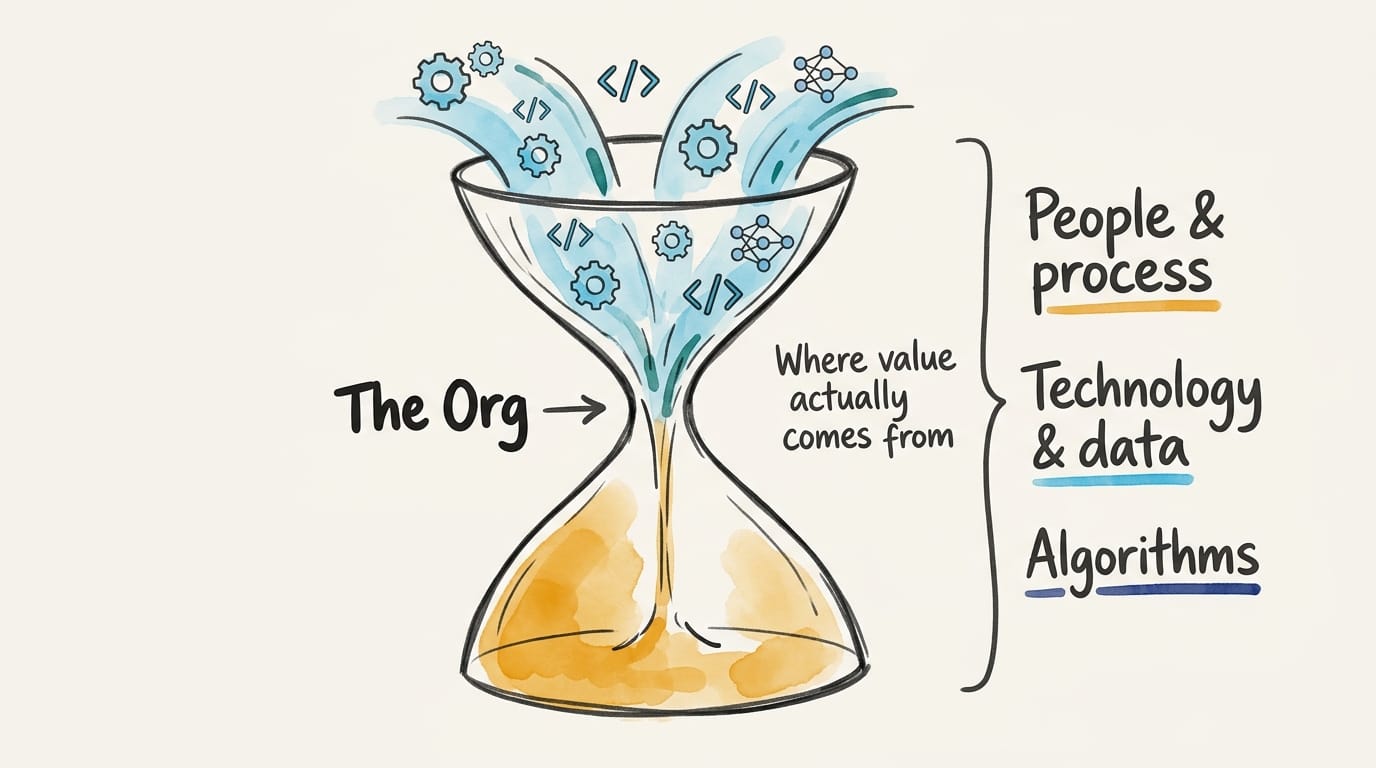

General-purpose technologies require substantial complementary organizational investments before they produce measurable productivity gains. Brynjolfsson, Rock, and Syverson argued in 2017 that AI fits this pattern, and the same lag showed up with earlier general-purpose technologies such as electrification and computing. The bottleneck isn't the model. It's the org.

BCG's analysis suggests the vast majority of AI value flows from people and process changes, not technology choices. BCG expresses this as a 70-20-10 principle: 70% people and process, 20% technology and data, 10% algorithms. The specific ratio is an approximation, but the directional claim has broad support. MIT's NANDA initiative, drawing on 150 executives, 350 employees, and 300 projects, found that 95% of generative AI pilots fail. The failures aren't model failures. They're absorption failures: the organization around the pilot can't integrate what it produces.

On the infrastructure side, IBM's research finds that 68% of AI-first organizations report mature, well-established data and governance frameworks. The rest are still building the foundation the technology requires.

The honest caveats -- in one place

A few things this argument doesn't prove.

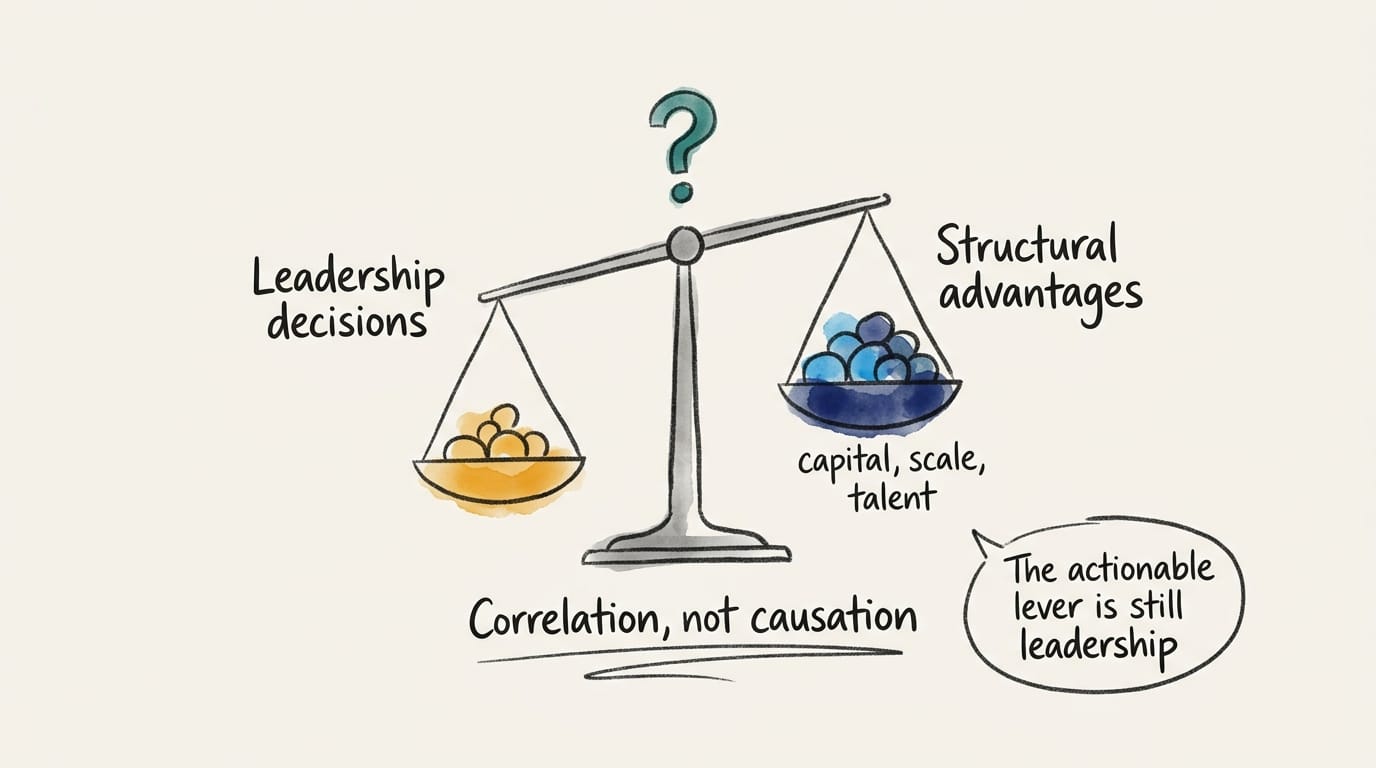

The PwC 74/20 finding correlates organizational behavior with outcomes. It doesn't establish that leadership decisions caused the gap. Retrospective framing is a standard concern with executive surveys -- leaders at successful firms may describe tactical decisions as strategic in hindsight, which inflates the apparent correlation between intent and outcome.

Organizations with more resources -- capital, scale, data moats, deeper talent benches -- can afford both better AI deployment and more sophisticated strategic framing. The comfortable story -- "make better decisions and join the top 20%" -- is probably incomplete. Some of those organizations lead because structural advantages made better decisions possible in the first place.

The compounding timeline, meaning the claim that the gap is widening and will keep widening, comes from practitioner research, not longitudinal panel data. The urgency is real, but the specific trajectory is asserted, not demonstrated. Meanwhile, technology access is commoditizing fast: 76% of enterprises now buy AI capabilities rather than build them, up from 47% in 2024, and 61% of enterprises prefer open-source models. If the gap were primarily about technology, that commoditization should be closing it.

AI systems trained on aggregated industry data also tend to converge work practices across organizations over time -- a dynamic Endacott and Leonardi (2023) call "endogenous isomorphism." Whether the gap compounds or converges is genuinely uncertain. The series bets on organizational learning speed mattering more than convergence pressure -- but names the bet.

The St. Louis Fed estimates only 1.9% excess cumulative productivity growth since ChatGPT's launch. An NBER survey of 6,000 executives found nearly 90% reporting zero productivity impact over three years. The macro evidence doesn't match the firm-level story.

Brynjolfsson's J-curve says gains are coming: we're in the implementation trough, and the payoff arrives after complementary investments mature. But Gries and Naudé (2018) offer a structurally different explanation: AI may boost supply-side productivity while suppressing demand through labor displacement, creating a net-zero macro effect even when firm-level gains are genuine. If that framing holds, and this is redistribution rather than creation, then "capture value" carries different ethical weight. The advice is still actionable for the individual firm, but the reader deserves the honest frame.

I'm going to prescribe action anyway, because organizational capability is the lever you actually have. Findings from academic and practitioner research, including Brynjolfsson et al. (2017), BCG, IDC, MIT NANDA, and PwC, point the same way on this: organizational factors matter more than technology selection. They agree on direction, not on a specific causal mechanism, and consulting-firm research shares incentive structures that limit true independence. But the signal is consistent enough to act on, even if the causal chain isn't proven. Don't let anyone sell you the uncaveated version.

What this means for you

The question for your organization isn't which AI model to deploy. It's this: Are you using AI to become something different, or to do the same things cheaper? Most leaders start with the second. The ones in PwC's top 20% didn't stay there.

Pull up your AI strategy document. If the first section covers model selection or vendor evaluation, you're answering the wrong question. The organizations in PwC's top 20% aren't asking "which AI?" -- they're asking what markets, revenue streams, and business models AI makes possible. If you don't have an AI strategy document yet, that itself is the finding.

What's your pilot-to-scaled ratio? Count them. If you've got fifteen active pilots and one scaled deployment, that's a collection of experiments, not a portfolio -- and experiments don't compound. BCG found that leaders pursue fewer use cases than laggards but scale more of them. The discipline to kill pilots is as important as the judgment to launch them.
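Counting can be made mechanical. Here is a minimal sketch that assumes a hypothetical inventory in which each AI initiative is tagged "pilot", "scaled", or "killed". Those labels are ours for illustration, not a PwC or BCG taxonomy. The sketch reports active pilots per scaled deployment.

```python
from collections import Counter

# Hypothetical portfolio inventory: each initiative is tagged "pilot",
# "scaled", or "killed". The labels are illustrative assumptions, not a
# published taxonomy or benchmark.

def pilot_to_scaled_ratio(statuses: list[str]) -> float:
    """Active pilots per scaled deployment.

    Returns infinity when there are pilots but nothing has scaled,
    and 0.0 when there are no active pilots at all.
    """
    counts = Counter(statuses)
    pilots, scaled = counts["pilot"], counts["scaled"]
    if scaled == 0:
        return float("inf") if pilots else 0.0
    return pilots / scaled

# The example from the text: fifteen active pilots, one scaled deployment.
portfolio = ["pilot"] * 15 + ["scaled"]
print(pilot_to_scaled_ratio(portfolio))  # 15.0
```

Killed pilots are deliberately left out of the ratio. Shutting down a pilot lowers the count of active experiments, which matches the point that the discipline to kill is part of the portfolio, not a failure of it.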

Who in your organization is accountable for AI outcomes -- not AI adoption? There's a difference. Accountability for adoption means someone owns the rollout. Accountability for outcomes means someone is on the hook if the revenue doesn't materialize. If you can't name that person in ten seconds, the organizational infrastructure for value capture doesn't exist yet.

This is piece one of eight.

The divide is about leadership. The series explains why.